How Facebook Intentionally Manipulated 689,003 Users' Emotions Without Their Knowledge

There may be more than just metrics determining which posts make it onto your feed.

Facebook is coming under serious fire today for a mood study it conducted back in 2012. Over at The Atlantic, Robinson Meyer explains what it was all about:

"For one week in January 2012, data scientists skewed what almost 700,000 Facebook users saw when they logged into its service. Some people were shown content with a preponderance of happy and positive words; some were shown content analyzed as sadder than average. And when the week was over, these manipulated users were more likely to post either especially positive or negative words themselves."

The results were logged and analyzed for a study on "emotional contagion" released in the Proceedings of the National Academy of Sciences. Facebook users had no idea.

Many previous studies have used Facebook data to examine “emotional contagion,” as this one did. This study is different because, while other studies have observed Facebook user data, this one set out to manipulate it.

Though it was deemed legal, the question now is whether it was ethical.

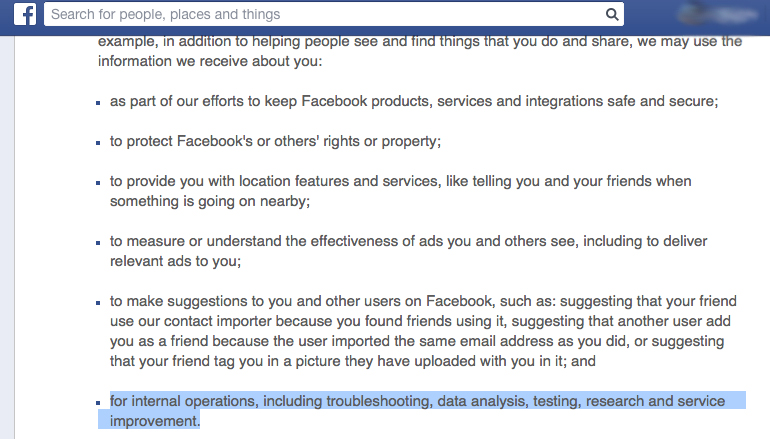

The experiment is almost certainly legal. In the company’s current terms of service, Facebook users relinquish the use of their data for “data analysis, testing, [and] research.” Is it ethical, though? Since news of the study first emerged, we’ve seen and heard both privacy advocates and casual users express surprise at the audacity of the experiment.

Even Susan Fiske, the editor of the study, had some serious concerns about it.

"People are supposed to be, under most circumstances, told that they're going to be participants in research and then agree to it and have the option not to agree to it without penalty," writes Adrienne LaFrance.

So what did this experiment actually discover?

The study found that by manipulating the News Feeds displayed to 689,003 Facebook users, it could affect the content those users posted to Facebook. More negative News Feeds led to more negative status messages, just as more positive News Feeds led to more positive statuses. As far as the study was concerned, this meant that it had shown “that emotional states can be transferred to others via emotional contagion, leading people to experience the same emotions without their awareness.”

It touts that this emotional contagion can be achieved without “direct interaction between people” (because the unwitting subjects were only seeing each other’s News Feeds). The researchers add that at no point during the experiment could they read individual users’ posts.

There are two interesting things that stick out in this study.

The first? The effect the study documents is very small: as little as one-tenth of a percent of an observed change. That doesn't mean it’s unimportant, though, as the authors add: “Given the massive scale of social networks such as Facebook, even small effects can have large aggregated consequences. […] After all, an effect size of d = 0.001 at Facebook’s scale is not negligible: In early 2013, this would have corresponded to hundreds of thousands of emotion expressions in status updates per day.”
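To see why a tiny relative shift can still add up at scale, here is a back-of-the-envelope sketch. The daily volume below is a made-up round number chosen only for illustration, not a figure from the study or from Facebook, and the calculation is a simplification rather than the authors' own method.

```python
# Hypothetical daily count of emotion words posted; NOT a real Facebook figure.
ASSUMED_EMOTION_WORDS_PER_DAY = 500_000_000

# "One-tenth of a percent", written as parts per thousand so the math stays in integers.
SHIFT_PER_THOUSAND = 1


def shifted_words_per_day(total_words, shift_per_thousand):
    """Return how many words per day a small relative shift would account for."""
    return total_words * shift_per_thousand // 1000


print(shifted_words_per_day(ASSUMED_EMOTION_WORDS_PER_DAY, SHIFT_PER_THOUSAND))
```

With these assumed inputs, a shift of one-tenth of a percent works out to 500,000 words a day, which is the sense in which a small effect can still be large in aggregate.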

The second was this line: “Omitting emotional content reduced the amount of words the person subsequently produced, both when positivity was reduced (z = −4.78, P < 0.001) and when negativity was reduced (z = −7.219, P < 0.001).” In other words, when researchers reduced the appearance of either positive or negative sentiments in people’s News Feeds, so that the feeds just got generally less emotional, those people stopped writing so many words on Facebook. Make people’s feeds blander and they stop typing things into Facebook.

Was the study well designed?

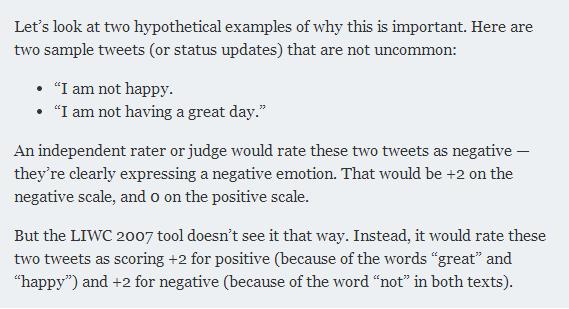

Perhaps not, says John Grohol, the founder of psychology website Psych Central. Grohol believes the study’s methods are hampered by the misuse of tools: software better suited to analyzing novels and essays, he says, is being applied to the much shorter texts on social networks.

“What the Facebook researchers clearly show,” writes Grohol, “is that they put too much faith in the tools they’re using without understanding — and discussing — the tools’ significant limitations.”
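A rough sketch can show the kind of limitation Grohol has in mind. The code below is not the researchers' software; it is a deliberately naive word-counting labeler, with tiny hypothetical word lists, that scores a post by tallying positive and negative words. On a short post, a single negation is enough to flip the meaning while leaving the count unchanged.

```python
import re

# Hypothetical, tiny word lists for illustration only; real tools use far larger dictionaries.
POSITIVE_WORDS = {"happy", "great", "love", "good"}
NEGATIVE_WORDS = {"sad", "awful", "hate", "bad"}


def count_emotion_words(text):
    """Return (positive_count, negative_count) using plain word matching."""
    words = re.findall(r"[a-z']+", text.lower())
    positive = sum(word in POSITIVE_WORDS for word in words)
    negative = sum(word in NEGATIVE_WORDS for word in words)
    return positive, negative


def label_post(text):
    """Label a post by whichever kind of emotion word appears more often."""
    positive, negative = count_emotion_words(text)
    if positive > negative:
        return "positive"
    if negative > positive:
        return "negative"
    return "neutral"


# The negation "not" is ignored, so this unhappy post is labeled positive.
print(label_post("I am not happy"))
```

In a long essay, one misread phrase is diluted by hundreds of other words. In a status update of four words, it decides the whole label.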

Did an institutional review board, an independent ethics committee that vets research that involves humans, approve the experiment?

Yes, according to Susan Fiske, the Princeton University psychology professor who edited the study for publication. “I was concerned,” Fiske told The Atlantic, “until I queried the authors and they said their local institutional review board had approved it—and apparently on the grounds that Facebook apparently manipulates people's News Feeds all the time.”

Fiske added that she didn’t want “the originality of the research” to be lost, but called the experiment “an open ethical question.” “It's ethically okay from the regulations perspective, but ethics are kind of social decisions. There's not an absolute answer. And so the level of outrage that appears to be happening suggests that maybe it shouldn't have been done...I'm still thinking about it and I'm a little creeped out, too.”

Were the experiment's subjects able to provide informed consent?

Facebook relies on the term “research” in its Data Use Policy as permission to conduct experiments on users.

In its ethical principles and code of conduct, the American Psychological Association (APA) defines informed consent like this: “When psychologists conduct research or provide assessment, therapy, counseling, or consulting services in person or via electronic transmission or other forms of communication, they obtain the informed consent of the individual or individuals using language that is reasonably understandable to that person or persons except when conducting such activities without consent is mandated by law or governmental regulation or as otherwise provided in this Ethics Code.”

As mentioned above, the research seems to have been carried out under Facebook’s extensive terms of service. The company’s current data use policy, which governs exactly how it may use users’ data, runs to more than 9,000 words. Does that constitute “language that is reasonably understandable”?

The APA has further guidelines for so-called “deceptive research” like this, where the real purpose of the research can’t be made available to participants during research. The last of these guidelines is: “Psychologists explain any deception that is an integral feature of the design and conduct of an experiment to participants as early as is feasible, preferably at the conclusion of their participation, but no later than at the conclusion of the data collection, and permit participants to withdraw their data.”

At the end of the experiment, did Facebook tell the user-subjects that their News Feeds had been altered for the sake of research? If so, the study never mentions it.

James Grimmelmann, a law professor at the University of Maryland, believes the study did not secure informed consent. And he adds that Facebook fails even its own standards, which are lower than those of the academy: “A stronger reason is that even when Facebook manipulates our News Feeds to sell us things, it is supposed—legally and ethically—to meet certain minimal standards. Anything on Facebook that is actually an ad is labelled as such (even if not always clearly.) This study failed even that test, and for a particularly unappealing research goal: We wanted to see if we could make you feel bad without you noticing. We succeeded.”

Do these kinds of News Feed tweaks happen at other times?

At any one time, Facebook said last year, there were on average 1,500 pieces of content that could show up in your News Feed. The company uses an algorithm to determine what to display and what to hide. It talks about this algorithm very rarely, but we know it’s very powerful. Last year, the company changed News Feed to surface more news stories. Websites like BuzzFeed and Upworthy proceeded to see record-busting numbers of visitors.

So we know it happens. Consider Fiske’s explanation of the research ethics here—the study was approved “on the grounds that Facebook apparently manipulates people's News Feeds all the time.” And consider also that from this study alone Facebook knows at least one knob to tweak to get users to post more words on Facebook.

Over the course of the study, it appears, Facebook made some of us happier or sadder than we would otherwise have been. Now it's made all of us more mistrustful.